On July 17th, 2024, Frederik Dennig successfully defended his Ph.D. thesis. Read more about “Measure-Driven Visual Analytics of Categorical Data”.

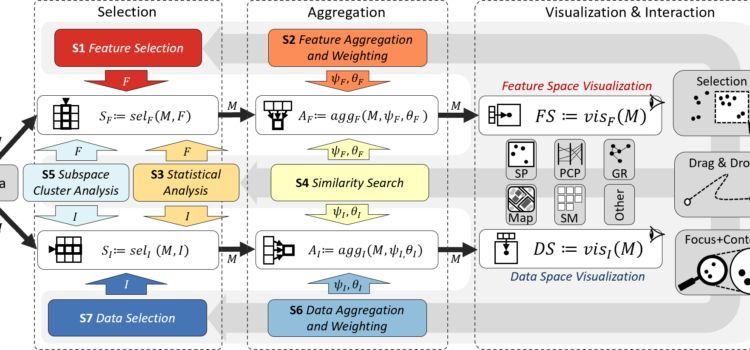

Measure-Driven Visual Analytics of Categorical Data

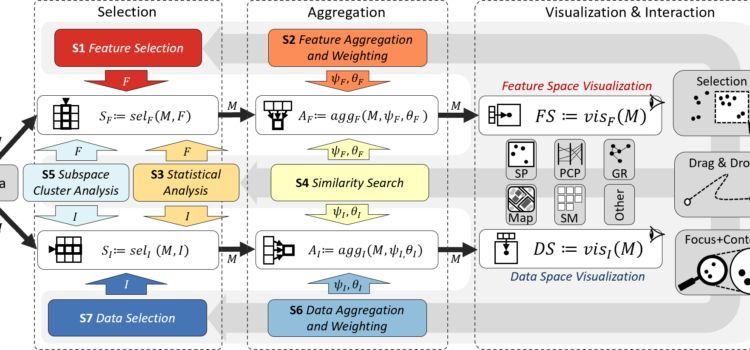

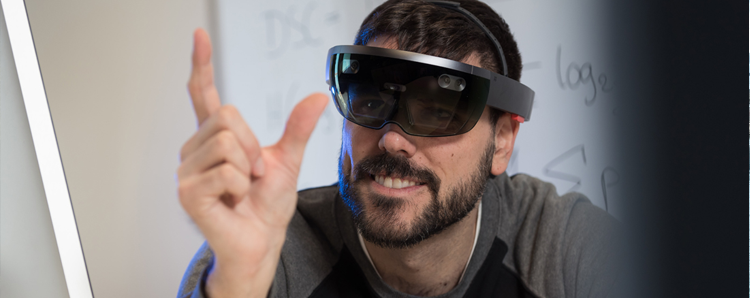

Since August 2018, Michael Sedlmair has been a researcher at the Visualization Research Center at the University of Stuttgart. As an Assistant Professor, he focuses on the development of Augmented Reality (AR) and Virtual Reality (VR) solutions, Data Visualization, and Human-Computer Interaction (HCI). In an interview, he answered questions about his current and future research activities and his idea of what our future might look like.

On Wednesday, September 20th, Jakob Karolus and I ran a Physical Computing and Biosensing Hackathon as part of the AffecTech training week in Lancaster. In this day-long event, 15 AffecTech Ph.D. students with backgrounds ranging from Clinical Psychology to Human-Computer Interaction worked in multidisciplinary groups to create systems that support people coping with emotional and affective conditions.

Each year in December, senior Human-Computer Interaction researchers meet to discuss the articles submitted to the Conference on Human Factors in Computing Systems (CHI for short). CHI is the most important venue for research on Human-Computer Interaction and covers a broad range of research, from understanding people to novel interaction techniques and visualization. This year, over 300 researchers came to Montreal to discuss the articles submitted to CHI 2018. Harald Reiterer and I participated in the meeting as associate chairs from Konstanz and Stuttgart. CHI accepts only about 25% of submissions after a rigorous peer review process. With 16 accepted publications, the groups participating in SFB-TRR 161 from Konstanz, Tübingen, and Stuttgart have been very successful and are happy about how well their submissions were received.

This year, the 16th International Conference for Mobile and Ubiquitous Multimedia (MUM 2017) was held at the University of Stuttgart. Researchers from all around the world met from November 26th to 29th to present and discuss their latest work. Its single-track program featured presentations on several topics from the cutting edge of research in Human-Computer Interaction, along with an art exposition, a Doctoral Consortium, poster sessions, workshops, and tutorials.

In May, we had the pleasure of attending this year’s CHI conference in Denver, Colorado, USA. The ACM Conference on Human Factors in Computing Systems (CHI) is the top conference for research on Human-Computer Interaction. It brings together thousands of leading international researchers from academia and industry. Several members of the University of Konstanz and the SFB-TRR 161 contributed in various ways to the conference.

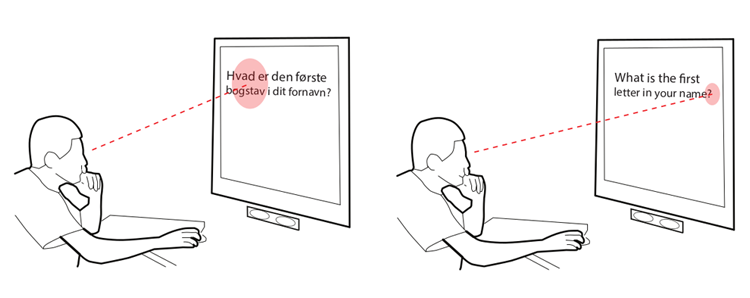

In an increasingly globalized world, the language barrier becomes a more prominent problem. It inhibits proper interaction not only between humans but also with information interfaces in the respective country. Navigating an interface in an unfamiliar language can be challenging and cumbersome. More often than not, poorly accessible language menus are of little to no help. Implicitly inferring a user’s language proficiency helps relieve customer frustration and improves the user experience of the system. The following image shows a sketch of such a language-aware interface.

Spatial memory is an essential part of our everyday life: We need no map to find the way to our best friend’s home, we know where to find milk cartons in our preferred supermarket, and most of the time we remember where we placed the remote control of our TV. Spatial memory and technology can be combined in a similar way: The desktop of our laptop represents a physical desktop and, as in a physical environment, documents and tools can be placed at different positions. Navigating to them is easy when done regularly.

In cooperation with the “GI-Fachgruppe Be-Greifbare Interaktion”, the HCI group at the University of Stuttgart organized the annual Inventors-workshop on the topic “Using Physiological Sensing for Embodied Interaction”. In the workshop, we introduced the basic concepts for sensing human muscle activity, accompanied by a refreshing keynote from Leonardo Gizzi. We explained the basics of how physiological sensing works, introduced how it can be technically realized, and showed different applications and usage scenarios.

“What we aim for in the end is some sort of mechanism that tells us whether the users understood what they were looking at.”

Jakob Karolus is a researcher at the Institute for Visualization and Interactive Systems at the University of Stuttgart, working in the field of Human-Computer Interaction. Within the project SFB-TRR 161 “Quantitative Methods for Visual Computing”, he wants to find out how different visualizations influence people’s eye movement patterns.