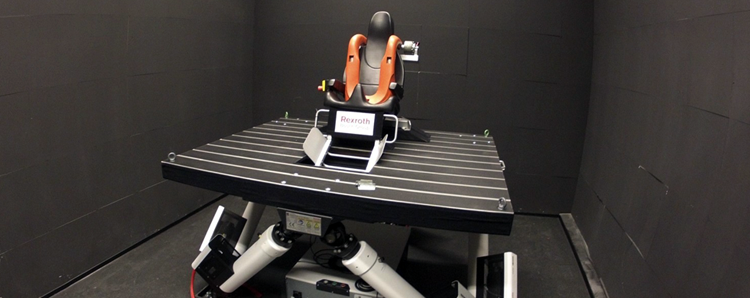

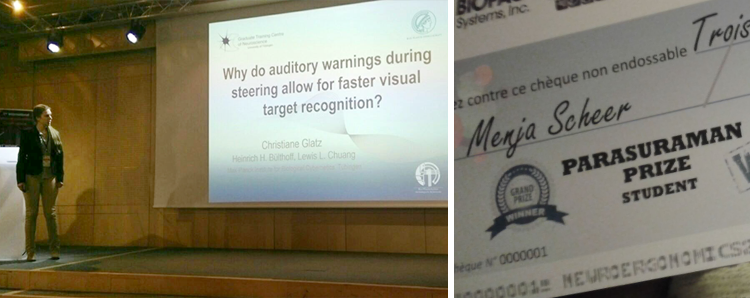

When designing technical solutions, developers know that their users' attentional capacity is limited. This raises an open question: how can warning systems best cue this limited attention and redirect it where it is needed? In a recent study at the Max Planck Institute for Biological Cybernetics in Tübingen, Lewis Chuang and Christiane Glatz tested different warning sounds. They found that some sounds were better than others at drawing our attention away from an ongoing task.

How to Cue and Redirect Our Attention with Warning Systems