In February our members gathered in Stuttgart for the 2nd SFB-TRR 161 Research Hackathon. This year’s motto: Multimodal and Collaborative Data in HCI.

Our 2nd Research Hackathon – Multimodal and Collaborative Data in HCI

I was given the opportunity to take part in a research stay at Monash University in Australia. Read more about it here!

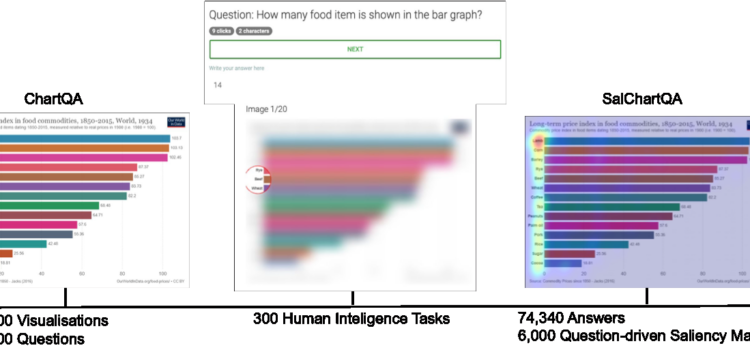

Yao Wang successfully defended his PhD thesis on November 5th, 2024. For our blog, he summarizes his research on understanding and predicting human visual attention.

In October 2023, Fiona Draxler from LMU Munich successfully defended her PhD thesis. Read more about it!

During my six-month research stay at Carnegie Mellon University’s Human-Computer Interaction Institute (HCII) in Pittsburgh, USA, I had the opportunity to be part of Prof. Dr. David Lindlbauer’s Augmented Perception Lab. Working closely with David and his fantastic team was an incredibly rewarding experience that I would not have wanted to miss.

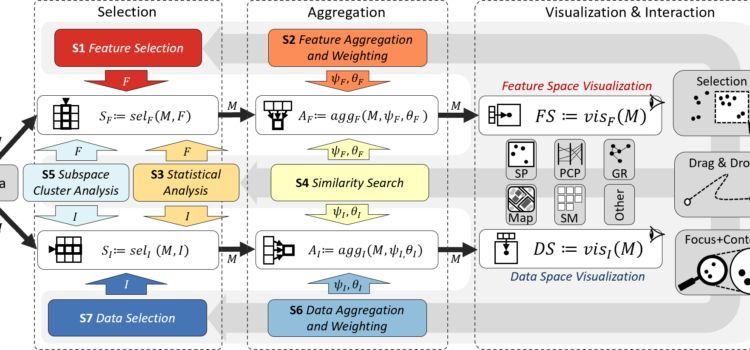

On July 17th, 2024, Frederik Dennig successfully defended his Ph.D. thesis. Read more about “Measure-Driven Visual Analytics of Categorical Data”.

On the first of July this year, I successfully defended my PhD thesis, titled “Quasi Continuous Level Monte Carlo Method” (1), and I am happy to share my work on the SFB-TRR 161 blog.

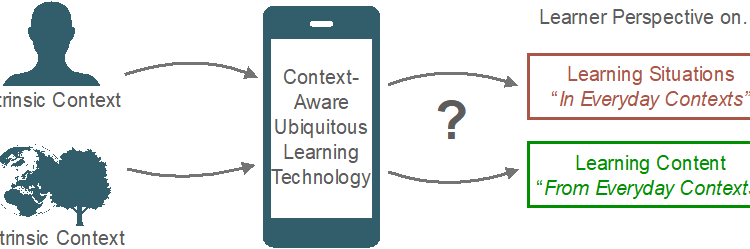

From January 30 to February 1, 2024, a group of SFB-TRR 161 researchers gathered in Stuttgart for our first hackathon. Their aim: developing visualization tools for eye-tracking data analysis with a focus on dimensionality reduction techniques.

As a doctoral researcher in project A03 of the SFB-TRR 161, I am researching “Quantification of Visual Explainability.” During my research stay from February to May 2023, I joined the Visualization and Graphics (VIG) group at Utrecht University.

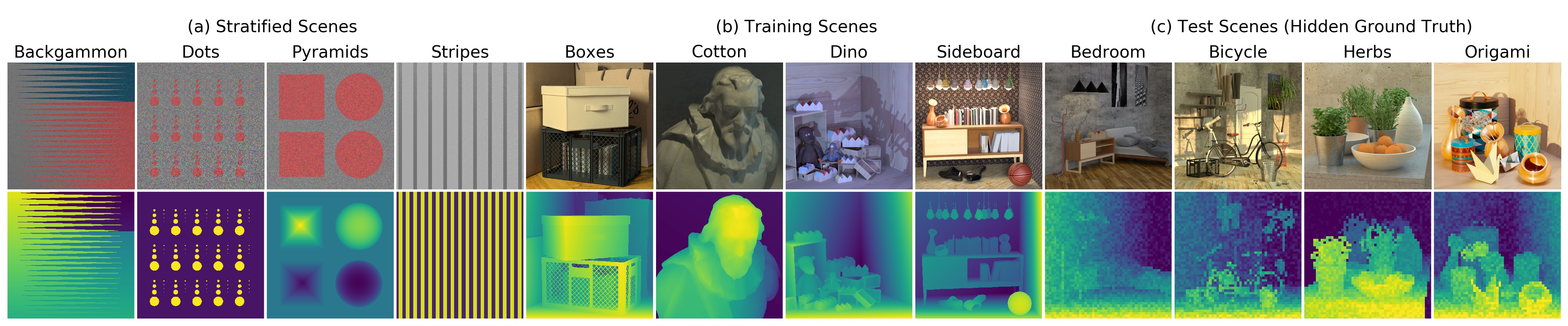

I’m delighted to share that I’ve successfully defended my Ph.D. thesis titled “Variational 3D Reconstruction of Non-Lambertian Scenes Using Light Fields”. Depth estimation from multiple cameras is the task of estimating the distance between the individual cameras and the scene.