In February our members gathered in Stuttgart for the 2nd SFB-TRR 161 Research Hackathon. This year’s motto: Multimodal and Collaborative Data in HCI.

Our 2nd Research Hackathon – Multimodal and Collaborative Data in HCI

In April 2025, three interns spent their BOGY week at the University of Konstanz, among them Illia Kovalchuk and David Pampel. Here is their report!

On Girls’ Day 2025, a total of ten girls attended the SFB-TRR 161 workshops in Konstanz and Stuttgart.

From February 24 to 28, 2025, we, the BOGY group, had the opportunity to complete a varied and instructive internship at the Visualization Research Center of the University of Stuttgart (VISUS).

I was given the opportunity to take part in a research stay at Monash University in Australia. Read more about it here!

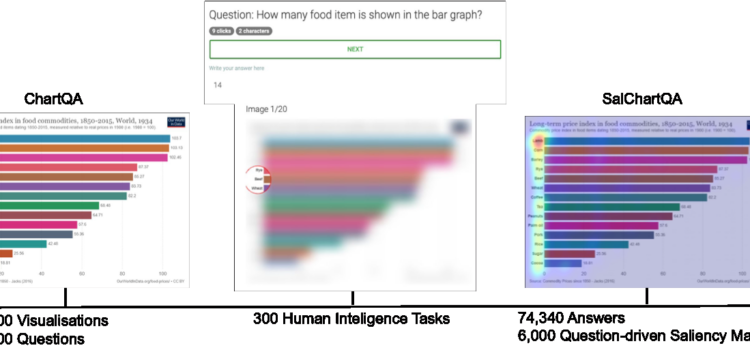

Yao Wang successfully defended his PhD thesis on November 5th, 2024. For our blog, he summarizes his research on understanding and predicting human visual attention.

In October 2023, Fiona Draxler from LMU Munich successfully defended her PhD thesis. Read more about it!

During my six-month research stay at Carnegie Mellon University’s Human-Computer Interaction Institute (HCII) in Pittsburgh, USA, I had the opportunity to be part of Prof. Dr. David Lindlbauer’s Augmented Perception Lab. Working closely with David and his fantastic team was an incredibly rewarding experience that I truly would not want to have missed.

Members of various SFB-TRR 161 projects came together and offered a Unity Crash Course at the University of Konstanz in October 2024.

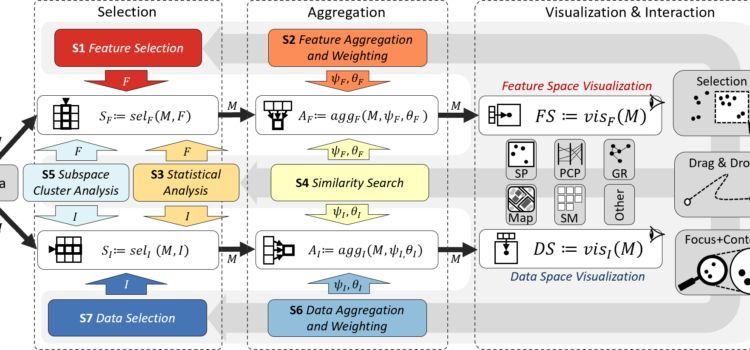

On July 17th, 2024, Frederik Dennig successfully defended his Ph.D. thesis. Read more about “Measure-Driven Visual Analytics of Categorical Data”.