From 8 to 12 November 2021, Nicholas Coledan completed an internship at the Stuttgart site of the SFB-TRR 161. On our blog, he shares his impressions.

My BOGY Internship at the University of Stuttgart

This year, Girls' Day was a joint project of the SFB-TRR 161 and the SFB 1313, and specifically a collaboration between the University of Konstanz and the University of Stuttgart. Together we formed a team of eight that had to take on the challenge of running Girls' Day online.

Malte Eggers, Simon Kloos, Nathanael Schweizer and Barbara Zimmermann report on their time as BOGY interns at the SFB-TRR 161.

In the last week before the Easter holidays, I visited the Schreiber chair at the University of Konstanz to do my BOGY internship there. Many thanks again to my two supervisors and their colleagues for this exciting week!

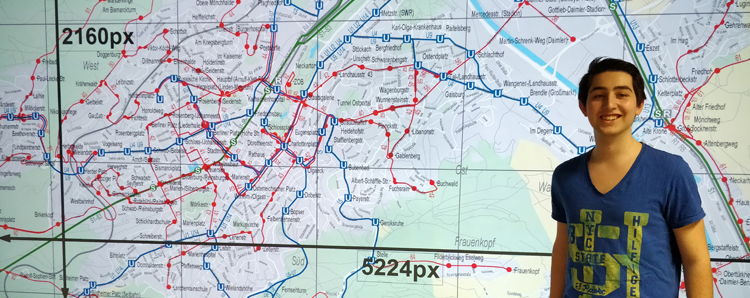

In the week from 25 February to 1 March, I completed my BOGY internship in the Data Analysis & Visualization group at the University of Konstanz. I feel this internship gave me a great deal, because it confirmed that the world of computer science is the right place for me. Thank you for the great week!

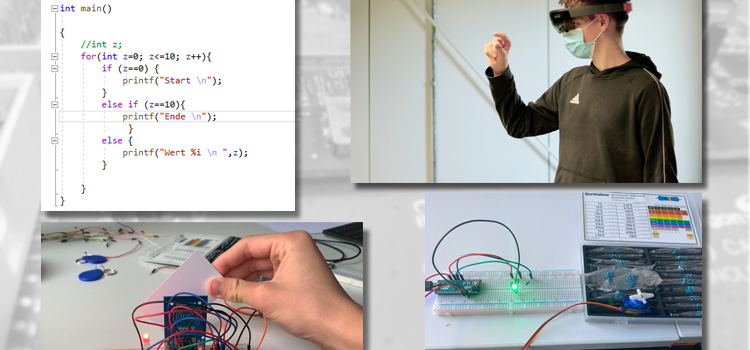

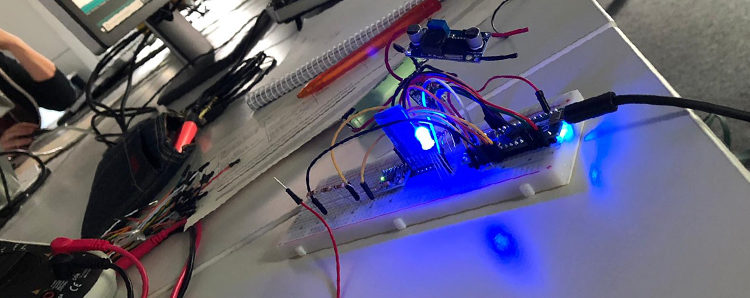

In the week before the Fasching (carnival) holidays, we completed our BOGY internship at the University of Stuttgart in the Collaborative Research Center SFB-TRR 161 "Quantifizierung und Visual Computing". During this time, we were given numerous insights into the various areas of work at VISUS and into what is distinctive about working as a research associate at a university.

Shortly before the autumn holidays, we visited the University of Stuttgart's visualization institute for a one-week internship. We worked on a wide variety of tasks. Through hands-on, independent work, we gained a lot of new experience in computer science. We also learned a great deal about how a university operates.

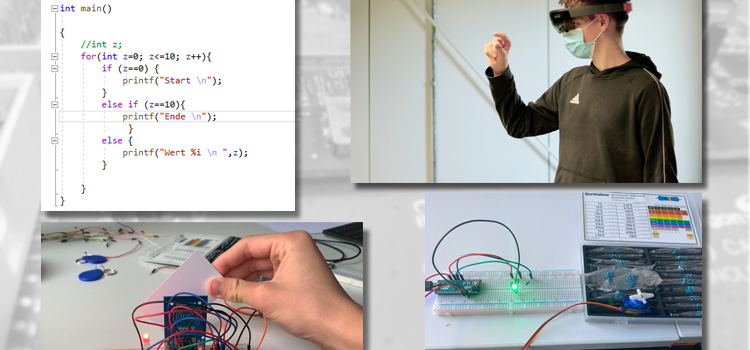

My BOGY internship began, in a sense, two weeks before the official start of BOGY. My supervisor Anna Alperovich from the University of Konstanz (Computer Vision and Image Analysis group, led by Prof. Goldlücke) wanted to familiarize me with how artificial neural networks work and how they are used.

With the help of VR binoculars, this is currently possible on Mainau Island in Konstanz, in the exhibition "Vom Bodensee nach Afrika – mit ICARUS auf Langstrecke"! At various stations, visitors can trace developments in animal observation and interactively learn about current research findings.

This week, the MS Wissenschaft started its tour in Berlin-Mitte. On board the exhibition ship is a touch table from the University of Stuttgart and the University of Konstanz, where visitors can learn how sustainable progress through Visual Computing is shaping our future world of work.