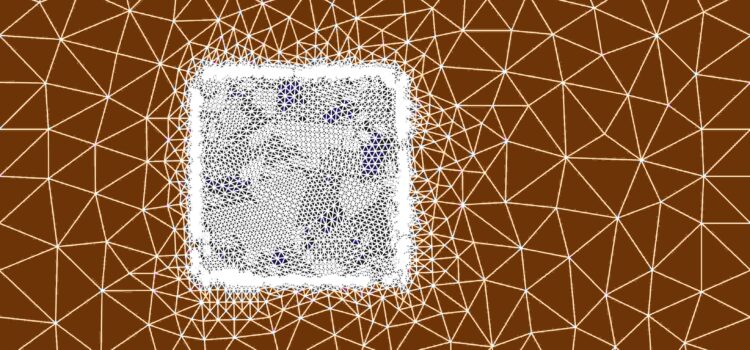

Quasi Continuous Level Monte Carlo Method

On July 1 of this year, I successfully defended my PhD thesis, titled "Quasi Continuous Level Monte Carlo Method" (1), and I am happy to share my work on the SFB-TRR 161 blog.

A programming course for schoolgirls took place from July 22 to 24, 2024, at the University of Konstanz. Guided by Thomas Ningelgen, the girls learned to program using the editor Processing.

Read about the third edition of the workshop Women* in Computing that took place on July 4, 2024 in Munich.

From May to July 2023, doctoral researcher Katrin Angerbauer went to London for her research stay abroad. On our blog, she writes about her experience of going abroad as a disabled researcher.

From January 30 to February 1, 2024, a group of SFB-TRR 161 researchers gathered in Stuttgart for our first hackathon. Their aim: developing visualization tools for eye-tracking data analysis with a focus on dimensionality reduction techniques.

From April 10 to 12, 2024, our doctoral students gathered at Burg Rothenfels for their annual doctoral retreat. In a relaxed atmosphere, they discussed their research and ideas for collaborations.

In March, Flavio completed his BOGY internship at the University of Konstanz in the Life Science Informatics group of Prof. Falk Schreiber.

Here is his report:

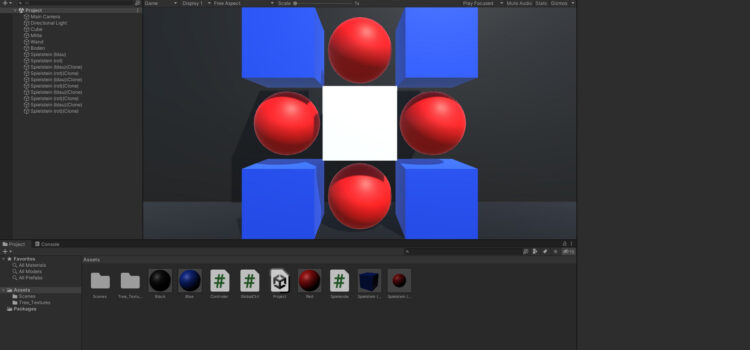

As students in grades 9 and 10, we decided to get a glimpse into the world of technology and visualization. What could be better suited for that than a BOGY internship at VISUS at the University of Stuttgart?

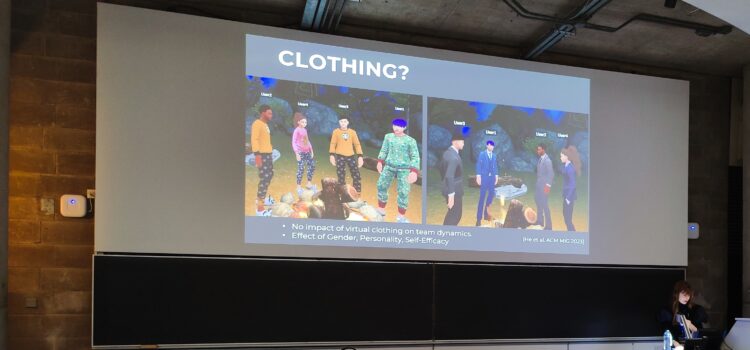

Wilhelm Kerle-Malcharek (D04) visited ICAT-EGVE 2023, the International Conference on Artificial Reality and Telexistence & Eurographics Symposium on Virtual Environments, held from December 6 to 8, 2023, in Dublin.

From October 23 to 27, 2023, we (Domenic, Justus, Luka, and Noemi) had our BOGY week at VISUS, the visualization institute of the University of Stuttgart. Below, we report on the experiences we gathered there and the insights we gained.